Stop guessing

your LLM costs.

Track every API call, monitor costs in real-time, and get alerts before your budget runs out. One line of code.

npm install costrace

// integrations

// features

Everything you need to

control LLM costs

Real-time Cost Tracking

Track every API call across OpenAI, Anthropic, and Gemini. See costs broken down by model, team, and project with zero configuration.

$1.4K

tracked this month

Latency Monitoring

Monitor response times across all your LLM providers. Identify slow endpoints and optimize your AI pipeline performance.

142ms

avg response time

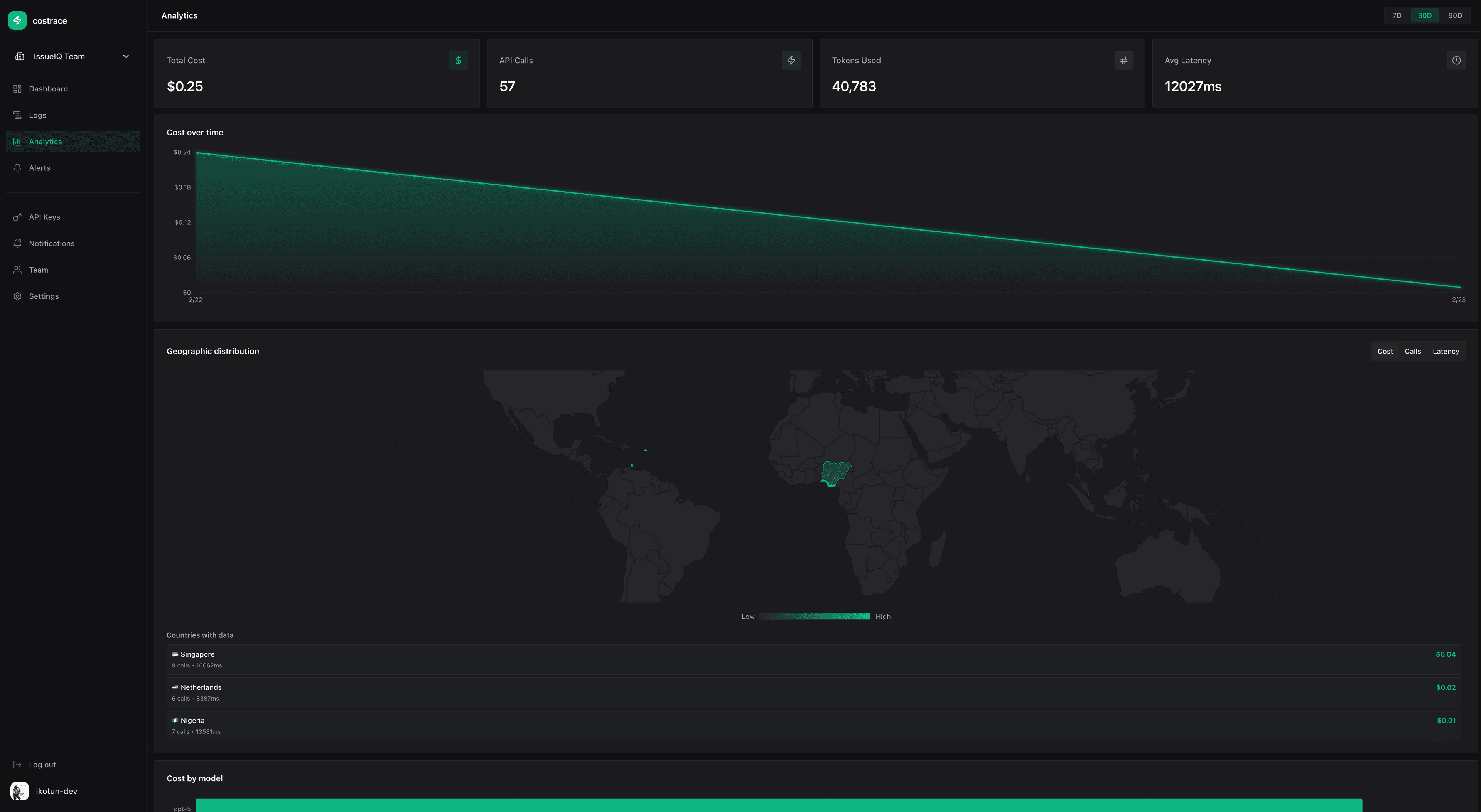

Geographic Insights

See where your API calls originate. Understand geographic distribution patterns and optimize for latency and compliance.

4

regions tracked

// setup

Two lines of code.

That's the entire setup.

Install the SDK

Add Costrace to your project with a single command. (This sample is for the python sdk)

uv add costrace-sdkbashInitialize with one line

Pass your API key and you're ready to go.

costrace.init(api_key="ct_...")pythonView your dashboard

Traces appear automatically as you call OpenAI, Anthropic, or Gemini.

# That's it. No other config.python// integration

One line. Every provider.

Add Costrace to your project and every LLM API call is tracked automatically. No wrappers, no config files.

import costrace

from openai import OpenAI

costrace.init(api_key="ct_your_api_key")

# Use OpenAI as you normally would

client = OpenAI()

response = client.chat.completions.create(

model="gpt-4o",

messages=[{"role": "user", "content": "Hello!"}]

)

# Cost, latency, and tokens tracked automatically// pricing

Start free. Scale when ready.

Free

For side projects and experimentation

Pro

For teams shipping AI to production